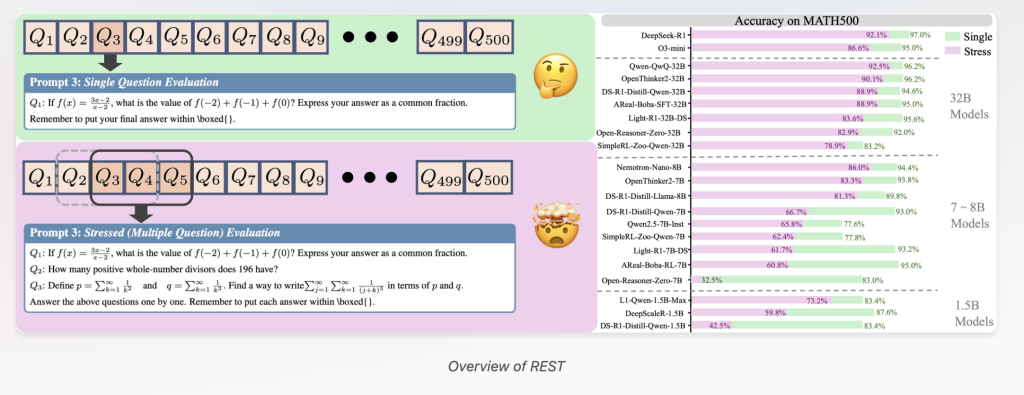

Large Reasoning Models (LRMs) have advanced rapidly, showing impressive performance on complex problem-solving tasks across domains such as mathematics, coding, and scientific reasoning. However, current evaluation approaches focus primarily on single-question testing, which has significant limitations. This article introduces REST (Reasoning Evaluation through Simultaneous Testing), a novel multi-problem stress-testing framework designed to push LRMs beyond isolated problem-solving and better reflect their real-world multi-context reasoning capabilities.

Why Current Evaluation Benchmarks Fall Short for Large Reasoning Models

Most current benchmarks, such as GSM8K and MATH, evaluate LRMs by asking one question at a time. While effective for initial model development, this isolated-question approach has two significant drawbacks:

- Decreasing Discriminative Power: Many state-of-the-art LRMs now achieve near-perfect scores on popular benchmarks (e.g., DeepSeek-R1 reaching 97% accuracy on MATH500). These saturated results make it increasingly difficult to distinguish true model improvements, forcing the costly, continual creation of harder datasets to differentiate capabilities.

- Lack of Real-World Multi-Context Evaluation: Real-world applications, such as educational tutoring, technical support, or multitasking AI assistants, require reasoning across multiple, potentially interfering questions simultaneously. Single-question testing does not capture these dynamic, multi-problem challenges that reflect true cognitive load and reasoning robustness.

Introducing REST: Stress-Testing LRMs with Multiple Problems at Once

To address these challenges, researchers from Tsinghua University, OpenDataLab, Shanghai AI Laboratory, and Renmin University developed REST, a simple yet powerful evaluation method that simultaneously tests LRMs on multiple questions bundled into a single prompt.

- Multi-Question Benchmark Reconstruction: REST repurposes existing benchmarks by concatenating multiple questions into one prompt, adjusting the stress level parameter that controls how many questions are presented simultaneously.

- Comprehensive Evaluation: REST evaluates critical reasoning competencies beyond basic problem-solving, including contextual priority allocation, cross-problem interference resistance, and dynamic cognitive load management.

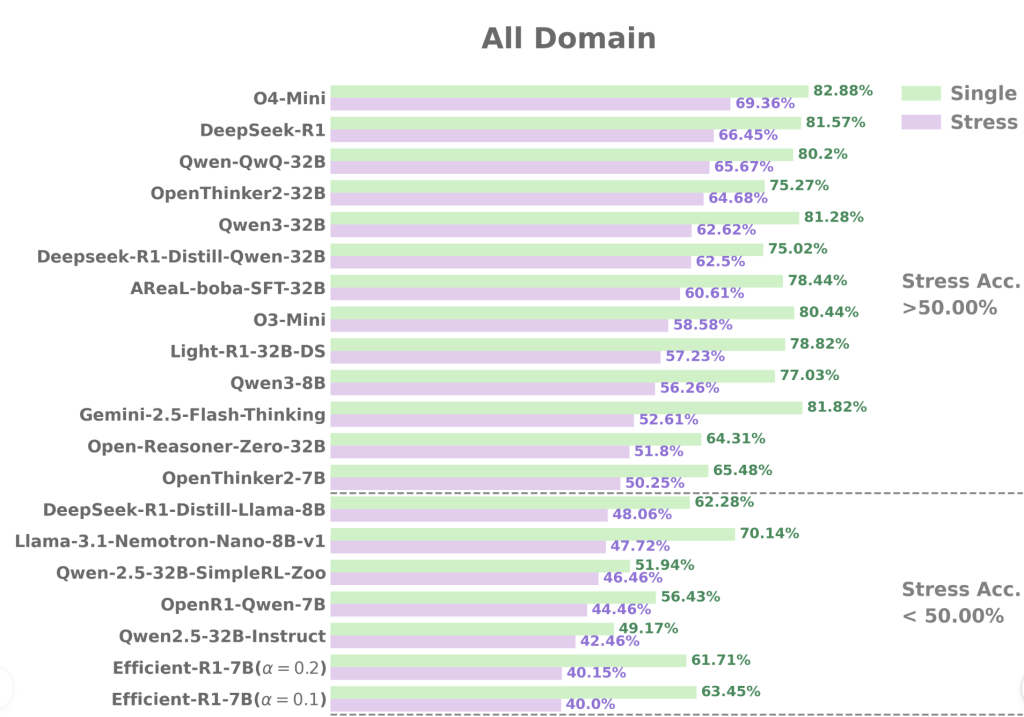

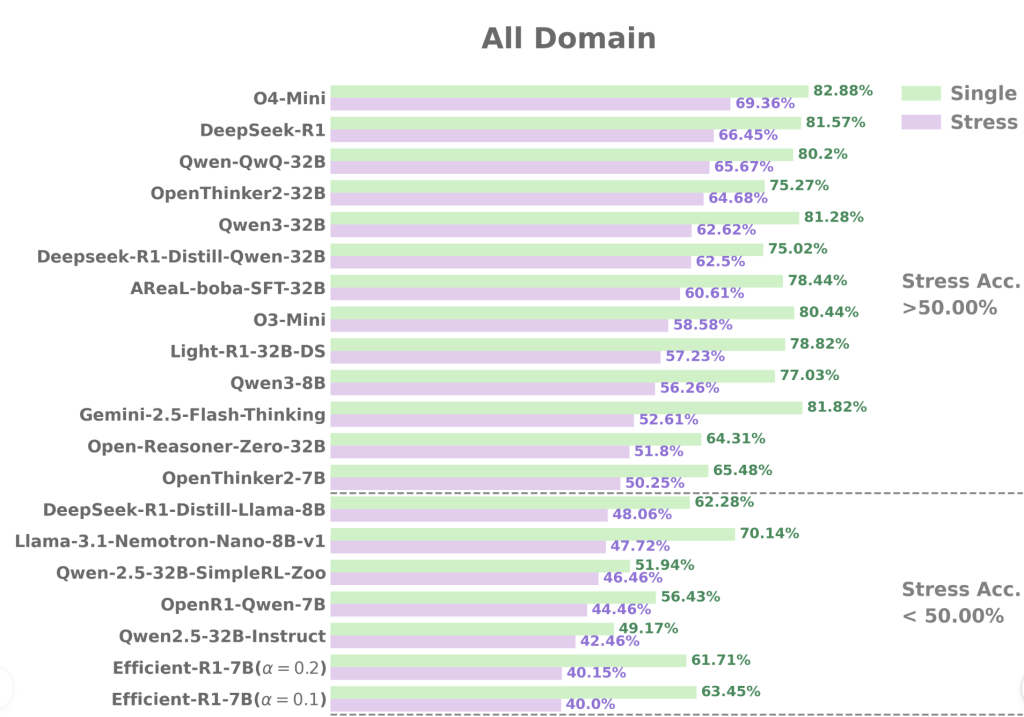

- Wide Applicability: The framework is validated on 34 advanced LRMs ranging from 1.5 billion to 671 billion parameters, tested on 7 diverse benchmarks across varying difficulty levels (from simple GSM8K to challenging AIME and GPQA).
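The benchmark reconstruction step can be illustrated with a minimal Python sketch. It assumes a benchmark is just a list of question strings and that consecutive questions are grouped together. The function name `build_rest_prompts`, the `Q<number>` / `A<number>` labels, and the instruction wording are hypothetical choices made for illustration; the actual REST grouping strategy and prompt template may differ.

```python
from typing import List


def build_rest_prompts(questions: List[str], stress_level: int) -> List[str]:
    """Bundle consecutive questions into prompts of `stress_level` questions.

    Hypothetical helper: the grouping rule and prompt wording are assumptions,
    not the template used by the REST authors.
    """
    if stress_level < 1:
        raise ValueError("stress_level must be at least 1")

    prompts = []
    for start in range(0, len(questions), stress_level):
        group = questions[start:start + stress_level]
        # Number the questions within each prompt so answers can be matched back.
        numbered = "\n".join(
            f"Q{i}: {question}" for i, question in enumerate(group, start=1)
        )
        prompts.append(
            "Answer every question below. Give each final answer on its own "
            "line as 'A<number>: <answer>'.\n\n" + numbered
        )
    return prompts


if __name__ == "__main__":
    sample = ["What is 2 + 3?", "What is 7 * 6?", "What is 10 - 4?"]
    for prompt in build_rest_prompts(sample, stress_level=2):
        print(prompt)
        print("---")
```

With `stress_level=1` this reduces to ordinary single-question testing, which makes it easy to compare the two settings on the same data.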

REST Reveals Key Insights About LRM Reasoning Abilities

The REST evaluation uncovers several notable findings:

1. Significant Performance Degradation Under Multi-Problem Stress

Even state-of-the-art LRMs like DeepSeek-R1 show notable accuracy drops when handling multiple questions together. For example, DeepSeek-R1’s accuracy on challenging benchmarks like AIME24 falls by nearly 30% under REST compared with isolated question testing. This contradicts prior assumptions that large language models are inherently capable of effortlessly multitasking across problems.

2. Enhanced Discriminative Power Among Similar Models

REST dramatically amplifies the differences between models with near-identical single-question scores. On MATH500, for instance:

- R1-7B and R1-32B achieve close single-question accuracies of 93% and 94.6%, respectively.

- Under REST, R1-7B’s accuracy plummets to 66.75% while R1-32B maintains a high 88.97%, revealing a stark gap of about 22 percentage points.

Similarly, among same-sized models like AReaL-boba-RL-7B and OpenThinker2-7B, REST captures significant differences in multi-problem handling abilities that single-question evaluations mask.
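The MATH500 figures quoted above can be checked with a few lines of Python. The accuracy values come from the article; the helper names `accuracy_drop` and `model_gap` are hypothetical and exist only for this illustration.

```python
from typing import Dict

# MATH500 accuracies (percent) as quoted in the article.
SINGLE_QUESTION = {"R1-7B": 93.0, "R1-32B": 94.6}
UNDER_REST = {"R1-7B": 66.75, "R1-32B": 88.97}


def accuracy_drop(single: Dict[str, float], stressed: Dict[str, float]) -> Dict[str, float]:
    """Percentage points each model loses when moving from single to REST."""
    return {model: round(single[model] - stressed[model], 2) for model in single}


def model_gap(scores: Dict[str, float], model_a: str, model_b: str) -> float:
    """Absolute difference in percentage points between two models."""
    return round(abs(scores[model_a] - scores[model_b]), 2)


if __name__ == "__main__":
    print("Drop per model:", accuracy_drop(SINGLE_QUESTION, UNDER_REST))
    print("Gap, single-question:", model_gap(SINGLE_QUESTION, "R1-7B", "R1-32B"))
    print("Gap, under REST:", model_gap(UNDER_REST, "R1-7B", "R1-32B"))
```

The gap between the two models grows from under 2 percentage points in the single-question setting to roughly 22 under REST, which is the separation the article describes.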

3. Post-Training Methods May Not Guarantee Robust Multi-Problem Reasoning

Models fine-tuned with reinforcement learning or supervised tuning on single-problem reasoning often fail to preserve their advantages in REST’s multi-question setting. This calls for rethinking training strategies to optimize reasoning robustness under realistic multi-context scenarios.

4. “Long2Short” Training Enhances Performance Under Stress

Models trained with “long2short” techniques, which encourage concise and efficient reasoning chains, maintain higher accuracy under REST. This suggests a promising avenue for designing models better suited to simultaneous multi-problem reasoning.

How REST Simulates Realistic Reasoning Challenges

By increasing the cognitive load on LRMs through simultaneous problem presentation, REST simulates real-world demands where reasoning systems must dynamically prioritize, avoid overthinking one problem, and resist interference from concurrent tasks.

REST also systematically analyzes error types, revealing common failure modes such as:

- Question Omission: Ignoring later questions in a multi-question prompt.

- Summary Errors: Incorrectly summarizing answers across problems.

- Reasoning Errors: Logical or calculation errors within the reasoning process.

These nuanced insights are largely invisible in single-question evaluations.
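As a rough illustration of how question omission might be detected automatically, the sketch below scans a model response for final-answer lines and reports which question numbers have none. It assumes the model was asked to write each final answer on its own line as `A<number>: <answer>`. That format, along with the names `extract_answers` and `omitted_questions`, is a hypothetical convention for this example and not the error-analysis procedure used by the REST authors.

```python
import re
from typing import Dict, List

# Assumed answer format: a line such as "A2: 42". Lines with an empty
# answer after the colon are deliberately not matched.
ANSWER_LINE = re.compile(r"^A(\d+):[ \t]*(\S.*?)[ \t]*$", re.MULTILINE)


def extract_answers(response: str) -> Dict[int, str]:
    """Map question number to the final answer text found in the response."""
    return {int(number): text for number, text in ANSWER_LINE.findall(response)}


def omitted_questions(response: str, num_questions: int) -> List[int]:
    """Return the question numbers that received no final answer line."""
    answered = extract_answers(response)
    return [i for i in range(1, num_questions + 1) if i not in answered]


if __name__ == "__main__":
    response = "Working through the problems...\nA1: 5\nA3: 36\n"
    print(extract_answers(response))
    print(omitted_questions(response, num_questions=3))
```

A check like this only covers omission. Telling summary errors apart from reasoning errors requires comparing the reasoning trace with the final answer, which is beyond a simple pattern match.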

Practical Evaluation Setup and Benchmark Coverage

- REST evaluated 34 LRMs spanning sizes from 1.5B to 671B parameters.

- Benchmarks tested include:

  - Simple: GSM8K

  - Medium: MATH500, AMC23

  - Hard: AIME24, AIME25, GPQA Diamond, LiveCodeBench

- Model generation parameters are set according to official guidelines, with output token limits of 32K for reasoning models.

- Using the standardized OpenCompass toolkit ensures consistent, reproducible results.

Conclusion: REST as a Future-Proof, Realistic LRM Evaluation Paradigm

REST constitutes a significant leap forward in evaluating large reasoning models by:

- Addressing Benchmark Saturation: Revitalizes existing datasets without expensive full replacements.

- Reflecting Real-World Multi-Task Demands: Tests models under realistic, high cognitive load conditions.

- Guiding Model Development: Highlights the importance of training methods like Long2Short to mitigate overthinking and encourage adaptive reasoning focus.

In sum, REST paves the way for more reliable, robust, and application-relevant benchmarking of next-generation reasoning AI systems.

Check out the Paper, Project Page and Code. All credit for this research goes to the researchers of this project.

Sajjad Ansari is a final-year undergraduate from IIT Kharagpur. As a tech enthusiast, he delves into the practical applications of AI with a focus on understanding the impact of AI technologies and their real-world implications. He aims to articulate complex AI concepts in a clear and accessible manner.
